Neural networks, inspired by the structure and function of the human brain, are at the heart of many modern Artificial Intelligence (AI) applications. From image recognition and natural language processing to self-driving cars and personalized recommendations, neural networks are revolutionizing the way we interact with technology. This article provides an introductory overview of neural network architecture and functionality.

What are Neural Networks?

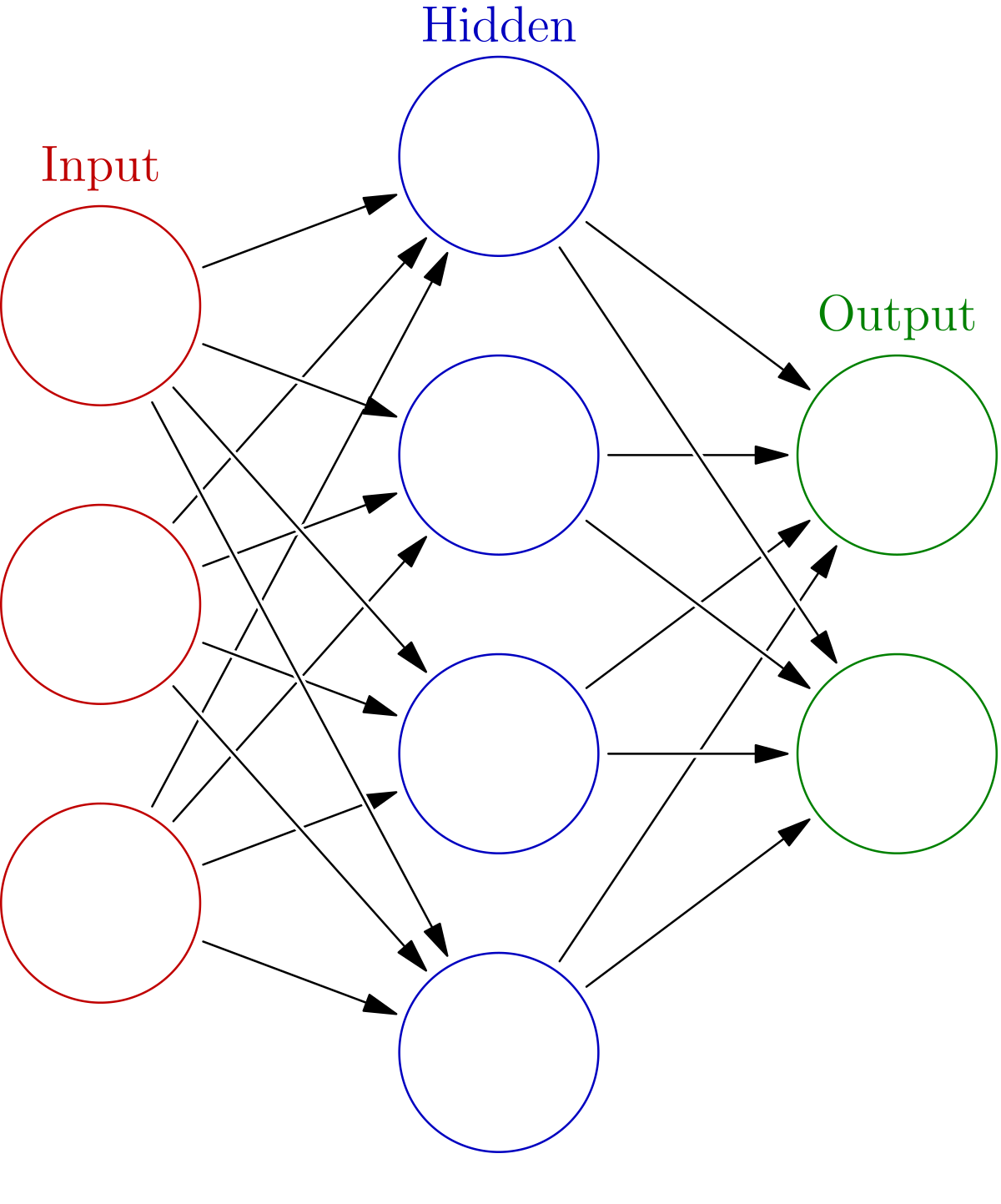

At their core, neural networks are computational models made of interconnected nodes, or “neurons,” organized in layers. These neurons process and transmit information, and together they learn complex patterns from data. The strengths of the connections between neurons, known as “weights,” are adjusted during the learning process to improve the network’s accuracy.

A simplified representation of a neural network with input, hidden, and output layers.

Key Components of a Neural Network

- Neurons (Nodes): The basic building blocks of a neural network. Each neuron receives inputs, processes them, and produces an output.

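- Weights: Numerical values that represent the strength of the connection between neurons. A weight with a larger magnitude gives its input more influence on the receiving neuron; a negative weight makes that input decrease, rather than increase, the neuron’s weighted sum.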

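- Biases: Values added to the weighted sum of inputs to shift the value passed to the activation function. Without a bias, a neuron whose inputs are all zero would always have a weighted sum of zero; the bias lets it produce a useful output in that case too.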

- Activation Functions: Mathematical functions applied to the weighted sum of inputs (plus bias) to determine the neuron’s output. They introduce nonlinearity, which lets the network model relationships that a purely linear model cannot. Common examples include sigmoid, ReLU (Rectified Linear Unit), and tanh (hyperbolic tangent).

- Layers: Neurons are organized into layers. A typical neural network consists of:

    - Input Layer: Receives the initial data.

    - Hidden Layers: Process the input and extract features. Networks with many hidden layers are called deep neural networks, which is where the term “deep learning” comes from.

    - Output Layer: Produces the final result or prediction.
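Each neuron combines these components: it computes the weighted sum of its inputs, adds its bias, and passes the result through an activation function. As a minimal sketch using only the Python standard library, the three activation functions listed above can be written as follows. The function names are our own, and the sigmoid uses a common rearrangement for negative inputs so that `math.exp` never overflows.

```python
import math

def sigmoid(x: float) -> float:
    """Squashes any real number into the range (0, 1)."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    # For negative x, rewrite as e^x / (1 + e^x) so math.exp never overflows.
    z = math.exp(x)
    return z / (1.0 + z)

def relu(x: float) -> float:
    """Passes positive values through unchanged and maps negatives to 0."""
    return max(0.0, x)

def tanh(x: float) -> float:
    """Squashes any real number into the range (-1, 1)."""
    return math.tanh(x)

# Compare the three functions on a few sample weighted sums.
for z in (-2.0, 0.0, 2.0):
    print(f"z={z:+.1f}  sigmoid={sigmoid(z):.3f}  relu={relu(z):.3f}  tanh={tanh(z):.3f}")
```

Sigmoid maps every input into (0, 1) and tanh into (-1, 1), while ReLU simply zeroes out negative values; that simplicity is one reason ReLU is widely used in hidden layers.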

How Neural Networks Function: The Learning Process

Neural networks learn through a process called “training.” This involves feeding the network large amounts of data and adjusting the weights and biases to minimize the “loss,” a measure of the difference between the network’s predictions and the actual values.
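To make the loss concrete, here is a minimal Python sketch of two common loss functions, Mean Squared Error (MSE) and binary cross-entropy, computed over a list of predictions and targets. The function names, the sample values, and the small `eps` clamp that keeps `math.log` away from zero are illustrative choices, not part of any particular library.

```python
import math
from typing import Sequence

def mse(predictions: Sequence[float], targets: Sequence[float]) -> float:
    """Mean Squared Error: the average of the squared differences."""
    return sum((p - t) ** 2 for p, t in zip(predictions, targets)) / len(targets)

def binary_cross_entropy(predictions: Sequence[float], targets: Sequence[float],
                         eps: float = 1e-12) -> float:
    """Binary cross-entropy for predicted probabilities and 0/1 targets."""
    total = 0.0
    for p, t in zip(predictions, targets):
        p = min(max(p, eps), 1.0 - eps)  # clamp so log() never receives 0
        total += -(t * math.log(p) + (1 - t) * math.log(1 - p))
    return total / len(targets)

# Hypothetical predictions (probabilities) and the true labels.
predictions = [0.9, 0.2, 0.7]
targets = [1.0, 0.0, 1.0]
print(mse(predictions, targets))
print(binary_cross_entropy(predictions, targets))
```

Both losses shrink as the predictions approach the targets. MSE is typical for regression, while cross-entropy is the usual choice when the output is a probability.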

The training process typically involves these steps:

- Forward Propagation: Input data is fed forward through the network, layer by layer, with each neuron performing its calculations.

- Loss Calculation: The network’s output is compared to the desired output, and a loss function is used to quantify the error. Common loss functions include Mean Squared Error (MSE) and Cross-Entropy.

- Backpropagation: The error is propagated backward through the network, calculating the gradient of the loss function with respect to each weight and bias.

- Optimization: An optimization algorithm (e.g., Gradient Descent, Adam) uses the gradients to update the weights and biases, aiming to reduce the loss.

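- Iteration: The process is repeated over many epochs (an epoch is one full pass through the training data, which may involve many weight updates) until the network reaches a satisfactory level of accuracy or the loss stops improving.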
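The steps above can be combined into a short training loop. The following is a minimal sketch, assuming a single sigmoid neuron, binary cross-entropy as the loss, and plain full-batch gradient descent as the optimizer; the toy dataset (the logical OR function), the learning rate, and the epoch count are hypothetical choices for illustration. With only one neuron, backpropagation reduces to a single application of the chain rule.

```python
import math

# Hypothetical toy dataset: the logical OR function, as (inputs, target) pairs.
data = [((0.0, 0.0), 0.0), ((0.0, 1.0), 1.0), ((1.0, 0.0), 1.0), ((1.0, 1.0), 1.0)]

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def train(data, learning_rate=1.0, epochs=1000):
    w1, w2, b = 0.0, 0.0, 0.0  # start from zero weights and bias
    n = len(data)
    loss = 0.0
    for _ in range(epochs):  # Iteration: each epoch is one full pass over the data
        grad_w1 = grad_w2 = grad_b = 0.0
        loss = 0.0
        for (x1, x2), target in data:
            # Forward propagation
            p = sigmoid(w1 * x1 + w2 * x2 + b)
            # Loss calculation (binary cross-entropy)
            loss += -(target * math.log(p) + (1 - target) * math.log(1 - p))
            # Backpropagation: for sigmoid + cross-entropy, dLoss/dz = p - target
            dz = p - target
            grad_w1 += dz * x1
            grad_w2 += dz * x2
            grad_b += dz
        # Optimization: one gradient-descent step using the averaged gradients
        w1 -= learning_rate * grad_w1 / n
        w2 -= learning_rate * grad_w2 / n
        b -= learning_rate * grad_b / n
    # Average loss measured during the last epoch (before its update)
    return w1, w2, b, loss / n

w1, w2, b, final_loss = train(data)
for (x1, x2), target in data:
    print((x1, x2), target, round(sigmoid(w1 * x1 + w2 * x2 + b), 3))
print("final loss:", final_loss)
```

Real networks have many layers, so backpropagation chains these derivatives through every layer, and optimizers such as Adam replace the plain update step; the overall loop structure stays the same.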

Here’s a simplified example illustrating the concept of forward propagation with a single neuron:

```python
import math

# Input values
input1 = 0.5
input2 = 0.8

# Weights
weight1 = 0.7
weight2 = 0.3

# Bias
bias = 0.1

# Weighted sum of inputs plus bias: 0.35 + 0.24 + 0.1 = 0.69
weighted_sum = (input1 * weight1) + (input2 * weight2) + bias

# Activation function (sigmoid)
def sigmoid(x):
    return 1 / (1 + math.exp(-x))

# Output of the neuron
output = sigmoid(weighted_sum)

print(output)  # Output: approximately 0.666
```
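The same computation extends to whole layers: every neuron in a layer computes its own weighted sum plus bias and applies the activation function, and each layer’s outputs become the next layer’s inputs. The following is a minimal sketch of forward propagation through a hypothetical network with 2 inputs, 3 hidden neurons (ReLU), and 1 output neuron (sigmoid). All weights, biases, and the helper name `dense_layer` are made up for illustration; the inputs match the single-neuron example, and the first hidden neuron reuses its weights and bias.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def relu(x):
    return max(0.0, x)

def dense_layer(inputs, weights, biases, activation):
    """One fully connected layer.

    weights[j][i] is the weight from input i to neuron j; biases[j] is neuron j's bias.
    """
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        z = sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        outputs.append(activation(z))
    return outputs

# Hypothetical parameters for a 2-input -> 3-hidden -> 1-output network.
hidden_weights = [[0.7, 0.3], [-0.4, 0.9], [0.2, -0.6]]
hidden_biases = [0.1, 0.0, 0.05]
output_weights = [[0.5, -0.3, 0.8]]
output_biases = [0.2]

x = [0.5, 0.8]  # input layer
hidden = dense_layer(x, hidden_weights, hidden_biases, relu)                 # hidden layer
output = dense_layer(hidden, output_weights, output_biases, sigmoid)  # output layer
print(hidden, output)
```

The third hidden neuron’s weighted sum is negative, so ReLU outputs 0 and that neuron contributes nothing to the output for this particular input.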

Types of Neural Networks

There are many different types of neural networks, each designed for specific tasks and data types. Some of the most common types include:

- Feedforward Neural Networks (FFNN): The simplest type, where data flows in one direction, from input to output.

- Convolutional Neural Networks (CNN): Excellent for image and video processing, using convolutional layers to extract features.

- Recurrent Neural Networks (RNN): Designed for sequential data, like text and time series, with feedback connections that allow them to maintain memory of past inputs.

- Long Short-Term Memory (LSTM) Networks: A type of RNN whose gating mechanism mitigates the vanishing gradient problem, enabling it to learn long-range dependencies in sequential data.

- Generative Adversarial Networks (GANs): Composed of two networks, a generator and a discriminator, that compete against each other to generate realistic data samples.
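Two of the mechanisms named above can be sketched in a few lines of Python. The first function is a 1D convolution: one small filter (kernel) slides along the input and the same weights are reused at every position. The second is a single-unit recurrent update, where the hidden state computed at one step is fed back in at the next, which is what gives an RNN its memory of earlier inputs. The kernel values, weights, and function names are hypothetical. As in most deep learning libraries, the convolution does not flip the kernel (strictly speaking, it is a cross-correlation).

```python
import math

def conv1d(signal, kernel):
    """'Valid' 1D convolution as used in CNNs (no kernel flip, i.e. cross-correlation)."""
    k = len(kernel)
    return [sum(signal[i + j] * kernel[j] for j in range(k))
            for i in range(len(signal) - k + 1)]

def rnn_hidden_states(sequence, w_input, w_hidden, bias):
    """Single-unit Elman-style RNN: h_t = tanh(w_input * x_t + w_hidden * h_{t-1} + bias)."""
    h = 0.0  # initial hidden state
    states = []
    for x in sequence:
        h = math.tanh(w_input * x + w_hidden * h + bias)  # previous h feeds into this step
        states.append(h)
    return states

# A hypothetical edge-detecting kernel: it responds where the signal changes.
print(conv1d([0, 0, 1, 1, 1, 0], [-1, 1]))  # → [0, 1, 0, 0, -1]

# The same input value (1.0) yields different states depending on what came before.
print(rnn_hidden_states([1.0, 0.0, 1.0], w_input=0.8, w_hidden=0.5, bias=0.0))
```

In a real CNN the kernel weights are learned during training rather than chosen by hand, and in a real RNN the hidden state is a vector with weight matrices in place of the scalars used here.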

Applications of Neural Networks

Neural networks are used in a wide range of applications, including:

- Image Recognition: Identifying objects, faces, and scenes in images.

- Natural Language Processing (NLP): Understanding and generating human language, powering chatbots, machine translation, and sentiment analysis.

- Speech Recognition: Converting spoken language into text.

- Recommendation Systems: Suggesting products, movies, and other items based on user preferences.

- Fraud Detection: Identifying fraudulent transactions.

- Medical Diagnosis: Assisting in the diagnosis of diseases based on medical images and patient data.

- Self-Driving Cars: Enabling vehicles to perceive their surroundings and navigate autonomously.

Conclusion

Neural networks are powerful tools for solving complex problems in various fields. Understanding their architecture and functionality is crucial for anyone interested in AI and machine learning. While this article provides a foundational overview, continuous learning and exploration are essential to stay abreast of the rapidly evolving field of neural networks.